bernieke (Cadet; joined Feb 17, 2013; 8 messages)

Hi,

After I upgraded to 12.0-U1 last week, I started getting the following error emails (several each day). All of them cleared themselves within a minute, and so did the resilvers, each of which resilvered at most a few MB in a few seconds:

* Read SMART Error Log Failed.

* smartd is not running

* Pool zpool state is ONLINE: One or more devices has experienced an unrecoverable error. An attempt was made to correct the error. Applications are unaffected.

* Pool zpool state is ONLINE: One or more devices is currently being resilvered. The pool will continue to function, possibly in a degraded state.

* Pool zpool state is ONLINE: One or more devices has experienced an error resulting in data corruption. Applications may be affected.

I also had drives faulted or removed on two occasions, each time two different drives. The first time the drives were faulted; the second time they were removed:

* Pool zpool state is DEGRADED: One or more devices are faulted in response to persistent errors. Sufficient replicas exist for the pool to continue functioning in a degraded state.

* Pool zpool state is DEGRADED: One or more devices has been removed by the administrator. Sufficient replicas exist for the pool to continue functioning in a degraded state. (They had certainly not been removed by me...)

After the drives were "removed" (not really; they were still listed in zpool status), the pool was no longer accessible, so I ran zpool clear to get it back. I then noticed my jails were no longer working properly, so I decided to do a full shutdown and a fresh boot.

After this, the machine kept rebooting every 10 minutes or so.

Looking at IPMI, I saw that the reboots were being triggered by the watchdog.

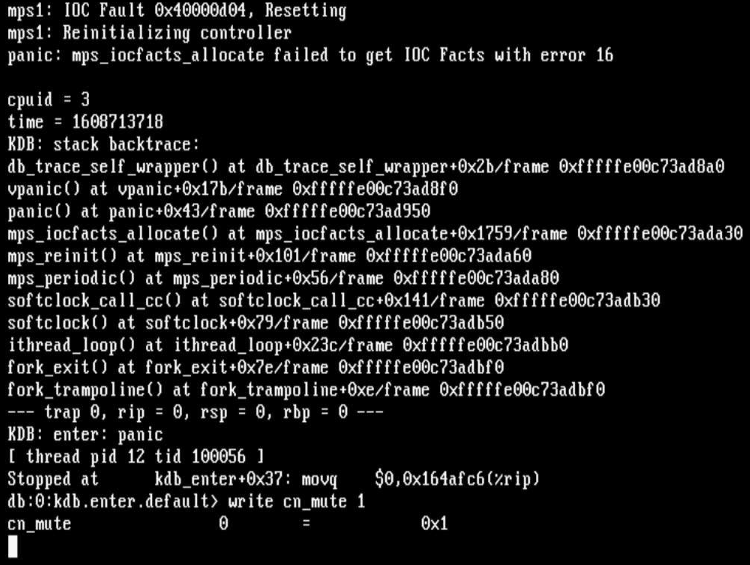

I attached the console and managed to grab a recording of the following kernel panic:

During the next reboot, I switched the boot environment back to 12.0-RELEASE, and the system has been running fine again for the past hour and a half. (The reboots had been going on for 3 hours before I changed the boot environment, so this was certainly not a coincidence.)

My system consists of:

* Supermicro X9SCA with Xeon E3-1200 v2 and 32GB ECC memory

* Three 9211-8i HBAs (20.00.04.00 IT firmware)

* 24 SATA drives (six 12TB TOSHIBA MG07ACA1, and eighteen 4TB drives: ST4000VN008, ST4000DM000, HGST HMS5C4040BL and Hitachi HUS72404)

If there's anything else I can provide to help you figure this out, please let me know.
