I've searched and found plenty of similar questions, so I'm prepared to be flamed for my ignorance, or arrogance, in thinking that my problem deserves its own post. But per forum rules, specs first:

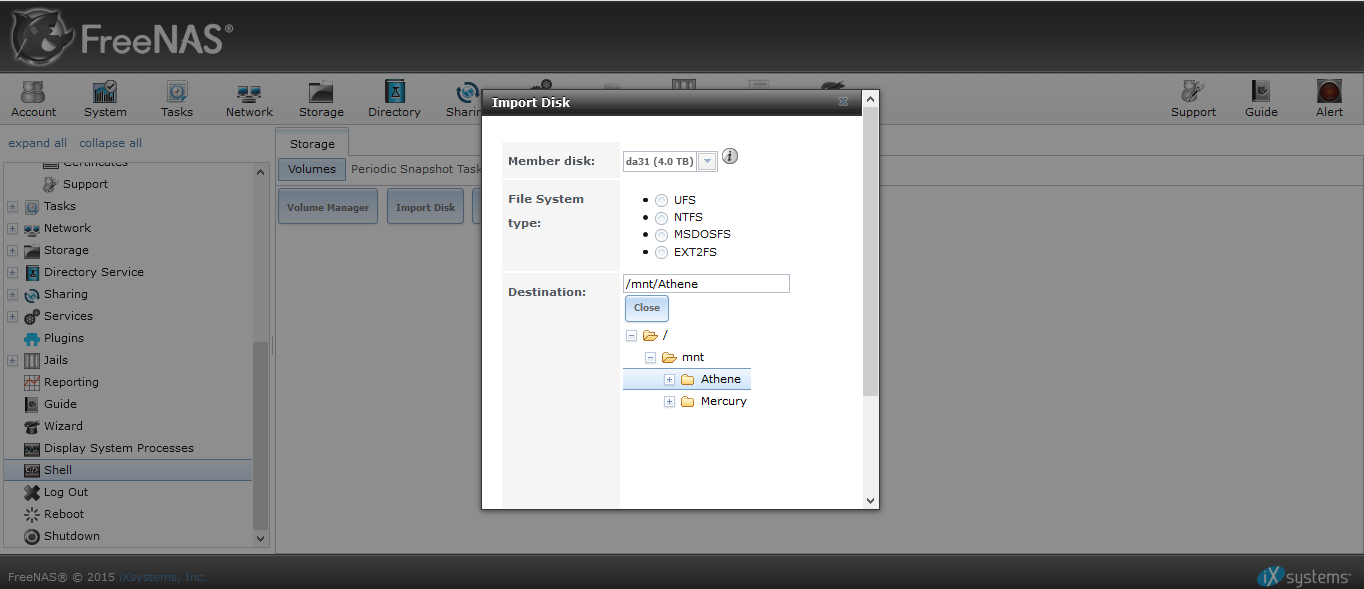

FreeNAS-9.3-STABLE-201506292130

A Supermicro chassis capable of holding 30 drives, plus a few more that were JB Welded into custom-made racks!

| Platform | Intel(R) Xeon(R) CPU E5-2609 v2 @ 2.50GHz |
|---|---|
| Memory | 131011MB |

Two main pools:

Athene (spelled wrong by the previous network admin): 36.2TB, built from ten two-way mirrors of 4TB drives (mirror-0 through mirror-9), plus a mirrored log and an SSD cache (zpool list and status to follow).

This is the array I'm most worried about: it's degraded, and if I lose the other drive in mirror-7 I lose it all.

Furthermore, the web GUI shows no pools; otherwise I'd just replace the drive per this video: https://www.youtube.com/watch?v=c8bvtj-LQ_A

Mercury: a 1.81TB pool of SSDs that the old network admin couldn't resist building into his custom NAS device.
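As a rough cross-check of the Athene layout, the raw capacity of a set of two-way mirrors can be estimated from the drive size alone. This is only a sketch: the helper name `mirror_capacity_tib` is made up for illustration, it assumes each drive is exactly 4 TB (decimal), and it ignores swap partitions and ZFS overhead, which is why the figure comes out slightly above the 36.2T that zpool list reports.

```shell
#!/bin/sh
# Hypothetical helper: raw capacity in TiB of N two-way mirrors of 4 TB drives.
# Each mirror contributes the capacity of a single drive (assumed 4e12 bytes).
mirror_capacity_tib() {
    awk -v n="$1" 'BEGIN { printf "%.1f\n", n * 4e12 / 2^40 }'
}

mirror_capacity_tib 10   # prints 36.4
mirror_capacity_tib 9    # prints 32.7
```

Ten mirrors is the count that lines up with the reported pool size.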

The system emailed us about the degraded array as follows:

Code:

    Checking status of zfs pools:
    NAME          SIZE   ALLOC  FREE   EXPANDSZ  FRAG  CAP  DEDUP  HEALTH    ALTROOT
    Athene        36.2T  16.0T  20.3T  -         54%   44%  1.00x  DEGRADED  /mnt
    Mercury       1.81T  629G   1.20T  -         46%   33%  1.00x  ONLINE    /mnt
    freenas-boot  15.5G  1.07G  14.4G  -         -     6%   1.00x  ONLINE    -

      pool: Athene
     state: DEGRADED
    status: One or more devices are faulted in response to persistent errors.
            Sufficient replicas exist for the pool to continue functioning in a
            degraded state.
    action: Replace the faulted device, or use 'zpool clear' to mark the device
            repaired.
      scan: scrub repaired 0 in 24h36m with 0 errors on Mon Jan 18 00:56:38 2021
    config:

    NAME                                            STATE     READ WRITE CKSUM
    Athene                                          DEGRADED     0     0     0
      mirror-0                                      ONLINE       0     0     0
        gptid/e17f9a3b-bf8b-11e9-961c-0cc47a18b26c  ONLINE       0     0     0
        gptid/8ba5b933-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-1                                      ONLINE       0     0     0
        gptid/8c1189e4-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8c81e63a-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-2                                      ONLINE       0     0     0
        gptid/8cf3ded2-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8d60e0c1-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-3                                      ONLINE       0     0     0
        gptid/8dd67a9f-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8e4a7a85-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-4                                      ONLINE       0     0     0
        gptid/8ec2063a-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8f3a9a82-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-5                                      ONLINE       0     0     0
        gptid/8fac2a90-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/901e01d0-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-6                                      ONLINE       0     0     0
        gptid/908e55cc-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/90fd58a5-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-7                                      DEGRADED     0     0     0
        gptid/91725279-482c-11e5-99e5-0cc47a18b26c  FAULTED      5   448     0  too many errors
        gptid/91e8270b-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-8                                      ONLINE       0     0     0
        gptid/925ede81-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/92d5d7ac-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-9                                      ONLINE       0     0     0
        gptid/9354b223-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/93c75f2d-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
    logs
      mirror-10                                     ONLINE       0     0     0
        gptid/95fa1e36-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/9646a173-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
    cache
      gptid/943385e6-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/94a5871c-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/9516c330-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/9589c284-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0

    errors: No known data errors

    -- End of daily output --

When we examined the web interface we discovered that we had no access to our pools, so the IT Director had me replace the drive anyway, expecting the system to resilver the array automatically. That did not work, and what little command line magic I could muster revealed the following error:

Code:

zpool status Athene

    action: Attach the missing device and online it using 'zpool online'.
       see: http://illumos.org/msg/ZFS-8000-2Q
      scan: scrub repaired 0 in 24h36m with 0 errors on Mon Jan 18 00:56:38 2021
    config:

    NAME                                            STATE     READ WRITE CKSUM
    Athene                                          DEGRADED     0     0     0
      mirror-0                                      ONLINE       0     0     0
        gptid/e17f9a3b-bf8b-11e9-961c-0cc47a18b26c  ONLINE       0     0     0
        gptid/8ba5b933-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-1                                      ONLINE       0     0     0
        gptid/8c1189e4-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8c81e63a-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-2                                      ONLINE       0     0     0
        gptid/8cf3ded2-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8d60e0c1-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-3                                      ONLINE       0     0     0
        gptid/8dd67a9f-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8e4a7a85-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-4                                      ONLINE       0     0     0
        gptid/8ec2063a-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/8f3a9a82-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-5                                      ONLINE       0     0     0
        gptid/8fac2a90-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/901e01d0-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-6                                      ONLINE       0     0     0
        gptid/908e55cc-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/90fd58a5-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-7                                      DEGRADED     0     0     0
        2463650246752463858                         UNAVAIL      0     0     0  was /dev/gptid/91725279-482c-11e5-99e5-0cc47a18b26c
        gptid/91e8270b-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-8                                      ONLINE       0     0     0
        gptid/925ede81-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/92d5d7ac-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
      mirror-9                                      ONLINE       0     0     0
        gptid/9354b223-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/93c75f2d-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
    logs
      mirror-10                                     ONLINE       0     0     0
        gptid/95fa1e36-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
        gptid/9646a173-482c-11e5-99e5-0cc47a18b26c  ONLINE       0     0     0
    cache
      gptid/943385e6-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/94a5871c-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/9516c330-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0
      gptid/9589c284-482c-11e5-99e5-0cc47a18b26c    ONLINE       0     0     0

    errors: No known data errors

So the faulty drive was not properly offlined, the web interface is not showing the existing pools, and the new drive has yet to be added to the pool correctly. The terminal commands, however, seem to be telling the truth. Since this FreeNAS device is over 5 years old and houses data for 4 clinics and over 80 VMs, my boss has decided to whip up a new NAS. In the meantime, I've decided to try to fix this array before we lose everything.
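Since the GUI can't be trusted here, one read-only way to keep an eye on the pool is to filter the status text for anything that is not ONLINE. A minimal sketch, assuming the usual five-column config layout shown in the output above; the function name `unhealthy_devices` is my own, not a ZFS or FreeNAS tool.

```shell
#!/bin/sh
# Hypothetical helper: list vdevs/devices whose STATE column is not ONLINE.
# Reads `zpool status` text on stdin, so it can be tried on saved output first.
# NF >= 5 skips the "state: DEGRADED" header line, which has only two fields.
unhealthy_devices() {
    awk 'NF >= 5 && $2 ~ /^(DEGRADED|FAULTED|UNAVAIL|OFFLINE|REMOVED)$/ { print $1, $2 }'
}

# Typical use on the live system (read-only, does not touch the pool):
#   zpool status Athene | unhealthy_devices
```

Run against the second status listing, this would print the pool, mirror-7, and the UNAVAIL GUID, which matches what the email and the terminal are already saying.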

Now then, I think the web interface is working well enough to import the new drive into the Athene array via Storage --> Import Disk (see pic).

Once that's done, I just need the command to offline the now-missing drive and the command to put da31 in its place. At this point I have little interest in trying to fix the web interface by re-importing the active yet missing pools, as I cannot afford to take the array offline for more than a few hours. However, if the experts deem it necessary, then so be it.
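For discussion, here is a sketch of the command sequence I have in mind, written as a dry run that only prints the commands instead of executing them. Everything in it is an assumption to be confirmed before anything is run for real: that da31 is the blank replacement disk, that the disk should get the 2 GiB swap plus ZFS partition layout I believe FreeNAS uses by default, and that the `<rawuuid-of-da31p2>` placeholder is filled in by hand from glabel status. The function name `print_replace_plan` is made up.

```shell
#!/bin/sh
# Hypothetical dry-run helper: prints a candidate replacement sequence.
# Nothing is executed; review each line before running it by hand.
#   $1 = pool name, $2 = GUID of the missing device, $3 = new disk (e.g. da31)
print_replace_plan() {
    pool=$1; old=$2; disk=$3
    cat <<EOF
zpool offline $pool $old
gpart create -s gpt $disk
gpart add -a 4k -s 2g -t freebsd-swap $disk
gpart add -a 4k -t freebsd-zfs $disk
glabel status | grep ${disk}p2
zpool replace $pool $old gptid/<rawuuid-of-${disk}p2>
zpool status $pool
EOF
}

print_replace_plan Athene 2463650246752463858 da31
```

The offline step may turn out to be unnecessary, since the device already shows as UNAVAIL and zpool replace accepts the GUID directly. The final zpool status is there to watch the resilver.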

I am also aware that some glabel trickery may be necessary, as the pool refers to its members by /dev/gptid labels rather than by BSD device names such as da31:
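To translate between the two naming schemes, the output of glabel status can be filtered in either direction. A small sketch, assuming the standard three-column layout (Name, Status, Components); both function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers that read `glabel status` output on stdin.

# Print the partition (e.g. da31p2) that backs a given gptid label.
device_for_gptid() {
    awk -v want="$1" '$1 == want { print $3 }'
}

# Print the gptid label that belongs to a given partition.
gptid_for_device() {
    awk -v dev="$1" '$1 ~ /^gptid\// && $3 == dev { print $1 }'
}

# Typical use on the live system (read-only):
#   glabel status | device_for_gptid gptid/91e8270b-482c-11e5-99e5-0cc47a18b26c
#   glabel status | gptid_for_device da31p2
```

The first form would tell me which physical disk is the surviving half of mirror-7; the second would give the label to hand to zpool once da31 is partitioned.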

2463650246752463858 UNAVAIL 0 0 0 was /dev/gptid/91725279-482c-11e5-99e5-0cc47a18b26c

Thus, I throw myself upon the mercy of the BSD zpool CLI Gods... May they have mercy...

Regards

HEX